I have watched hundreds of students panic over AI detector results that flagged their original work as "machine-generated." I have also seen AI-written essays sail through these same tools undetected. After years of working with academic writing technology and testing various detection systems, I can tell you this: understanding how these tools actually work is your best defense against false accusations and your clearest path to using AI responsibly.

In this post, you'll find out how AI detectors scan your writing, why they are not as reliable as most people think, and how to protect yourself from both false positives and real concerns. More importantly, you'll learn how to use AI tools in a way that is fair and keeps your academic integrity.

What is an AI Detector, and Why Does Everyone Suddenly Care?

An AI detector is software designed to identify whether a piece of text was written by a human or generated by artificial intelligence. These tools exploded in popularity after ChatGPT's release in late 2022, when professors and institutions scrambled to distinguish student work from AI output.

The stakes are real. Students face academic misconduct charges, sometimes based solely on detector results. Meanwhile, the technology behind these tools remains imperfect, prone to errors, and often misunderstood by the very educators making decisions based on their outputs.

Here is what you need to understand upfront: no AI detector is 100% accurate. They are probabilistic tools making educated guesses, not forensic analysis producing definitive proof.

The Three Main Detection Methods (and How Each One Can Fail)

Perplexity Scoring: Measuring Predictability

You can think of perplexity as a "surprise meter" for writing. AI models like ChatGPT generate text by predicting the most likely next word based on patterns learned from billions of training examples. When you read AI-generated content, it often feels smooth, almost too polished, because the model consistently chooses common, predictable words.

Perplexity measures this predictability. Low perplexity means the text follows expected patterns closely. High perplexity suggests more unusual word choices and sentence structures, which theoretically indicates human writing.
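To make the "surprise meter" concrete, here is a minimal sketch of a perplexity calculation. It is not how any commercial detector works: real tools score text with a large neural language model, while this toy uses a simple word-count (unigram) model with add-one smoothing. The function name `unigram_perplexity` and the sample sentences are my own illustrations.

```python
import math
from collections import Counter


def unigram_perplexity(text: str, reference: str) -> float:
    """Perplexity of `text` under a unigram model fit on `reference`.

    Assumption: add-one (Laplace) smoothing, so words never seen in the
    reference get a small non-zero probability instead of an infinite score.
    """
    ref_tokens = reference.lower().split()
    tokens = text.lower().split()
    if not tokens:
        raise ValueError("text must contain at least one word")

    counts = Counter(ref_tokens)
    vocab_size = len(set(ref_tokens) | set(tokens))
    total = len(ref_tokens)

    # Average the negative log-probability per word, then exponentiate.
    log_prob_sum = 0.0
    for tok in tokens:
        p = (counts[tok] + 1) / (total + vocab_size)
        log_prob_sum += math.log(p)
    return math.exp(-log_prob_sum / len(tokens))


reference = "the cat sat on the mat and the dog sat on the rug"
predictable = "the cat sat on the rug"
surprising = "quantum marmalade negotiates sideways"

low = unigram_perplexity(predictable, reference)
high = unigram_perplexity(surprising, reference)
print(f"predictable: {low:.2f}, surprising: {high:.2f}")
```

The sentence built from words the model has already seen scores lower than the one built from unseen words. A detector using this signal would read the lower score as "more AI-like," which is exactly why plain, conventional writing is at risk.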

The problem? This method completely breaks down with:

- Strong writers who favor clarity: If you write clean, direct sentences (like I'm encouraging you to do right now), detectors might flag your work as AI-generated simply because it's well-structured.

- Technical or formal writing: Academic papers naturally use standardized language and conventional phrasing, which lowers perplexity scores.

- Students writing in their second language: ESL students often get falsely flagged because they use simpler, more common vocabulary.

I have tested this personally. A physics student's original lab report, written in proper academic style, scored 78% AI-generated on one popular detector. The same text, rewritten with intentional quirks and informal language, dropped to 12%. The content was identical; only the presentation changed.

Burstiness Analysis: Looking at Sentence Rhythm

Burstiness examines variation in sentence length and structure. Human writers naturally alternate between short, punchy sentences and longer, complex ones. We create rhythm without thinking about it. AI models tend toward more uniform sentence structures because they are optimizing for coherence rather than stylistic variety.

Detectors measure this variation as a signal of human authorship. More burstiness equals a higher likelihood of being human-written.
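One simple way to put a number on sentence rhythm is the coefficient of variation of sentence length (standard deviation divided by mean). This is a sketch under my own assumptions, not a formula any particular detector has published; the names `sentence_lengths` and `burstiness` and the naive split on `.`, `!`, and `?` are illustrative only.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., !, ? and return the word count of each sentence."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def burstiness(text: str) -> float:
    """Coefficient of variation of sentence length (std dev / mean).

    Returns 0.0 when there are fewer than two sentences to compare.
    """
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    return statistics.pstdev(lengths) / statistics.mean(lengths)


uniform = "The test ran well. The data looked fine. The team was glad."
varied = (
    "It failed. Nobody on the team could explain why the second run "
    "diverged so sharply from the first. Odd."
)

print(sentence_lengths(uniform), burstiness(uniform))
print(sentence_lengths(varied), burstiness(varied))
```

The uniform passage has three four-word sentences, so its score is zero. The varied passage mixes very short and long sentences and scores much higher. A careful academic writer can easily land near the "uniform" end without any help from AI.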

The limitation here is obvious: not all humans write with dramatic variation. Some writers (especially in academic contexts) maintain consistent sentence length for clarity and professionalism. Meanwhile, newer AI models have been specifically trained to introduce more variation, making this method less reliable by the month. These evolving capabilities highlight the increasing importance of data annotation in refining AI models and enhancing how they interpret and reproduce human-like writing patterns.

Statistical Fingerprinting: Pattern Recognition at Scale

This is where things get technical, but bear with me because it matters. Advanced AI detector systems use machine learning models trained on millions of examples of both human and AI-generated text. They are essentially learning to spot patterns that correlate with AI authorship, even if those patterns aren't obvious to human readers.

These systems analyze features like:

- Word frequency distributions

- N-gram patterns (sequences of words that appear together)

- Syntactic structures and grammatical patterns

- Semantic coherence measures

- Token-level probability distributions

Think of it like how Spotify can identify a song from just a few seconds of audio. The detector isn't reading your essay the way a human would. It's converting your text into numerical features and comparing those features against learned patterns.
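Here is a rough sketch of what "converting your text into numerical features" can look like. The four features and the function names `ngrams` and `extract_features` are my own picks for illustration. Real detectors use far richer features and a trained classifier on top, and they tokenize more carefully than a whitespace split.

```python
from collections import Counter


def ngrams(tokens: list[str], n: int) -> list[tuple[str, ...]]:
    """Return every run of n consecutive tokens."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def extract_features(text: str) -> dict[str, float]:
    """Turn raw text into a small numeric feature vector."""
    tokens = text.lower().split()  # crude tokenization, punctuation stays attached
    if not tokens:
        raise ValueError("text must contain at least one word")

    word_counts = Counter(tokens)
    bigram_counts = Counter(ngrams(tokens, 2))
    n_bigrams = max(len(tokens) - 1, 1)

    return {
        # Vocabulary variety: distinct words divided by total words.
        "type_token_ratio": len(word_counts) / len(tokens),
        # How much the single most common word dominates.
        "top_word_share": word_counts.most_common(1)[0][1] / len(tokens),
        # Share of bigram occurrences that belong to a repeated bigram.
        "repeated_bigram_share": sum(
            c for c in bigram_counts.values() if c > 1
        ) / n_bigrams,
        "mean_word_length": sum(len(t) for t in tokens) / len(tokens),
    }


features = extract_features("the cat sat on the mat the cat slept")
for name, value in features.items():
    print(f"{name}: {value:.3f}")
```

A classifier never sees your sentences, only a vector like this one. Two essays with very different ideas can produce nearly identical vectors, and that is where both false positives and false negatives come from.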

The fundamental problem? These models are trained on past data and quickly become outdated. Each new generation of AI writing tools produces different statistical fingerprints. GPT-3.5 text looks different from GPT-4, which looks different from Claude or Gemini. Detectors constantly play catch-up, and their accuracy degrades over time.

Why False Positives Are More Common Than You Think

The dirty secret of AI detection is that false positives (flagging human work as AI-generated) happen disturbingly often. Research studies have shown false positive rates ranging from 10% to over 50%, depending on the detector and type of content.

Certain groups face higher rates of false accusation:

Non-native English speakers: One Stanford study found that detectors flagged non-native English writing as AI-generated at significantly higher rates than native speaker writing. The simpler vocabulary and grammatical patterns common in ESL writing match AI output patterns.

Neurodivergent students: Students with dyslexia or ADHD who use grammar-checking tools or write in very structured ways to compensate for processing differences often trigger false positives.

Students who revise heavily: Ironically, careful editing that removes quirks and improves flow can make your writing look more AI-generated. The more polished your work, the more suspicious it appears to some detectors.

I tested this with a student who'd been accused of using AI. She wrote a new essay from scratch in a Google Doc with full revision history visible. The final polished version scored 65% AI probability. The first draft, complete with typos and awkward phrasing, scored only 8%. Same author, same thoughts, different polish.

The Watermarking Debate: Can AI Companies Solve Detection?

Some AI companies are exploring digital watermarking, embedding imperceptible patterns into generated text that detectors could reliably identify. OpenAI and Google have both researched this approach.

The concept sounds promising: instead of guessing based on writing patterns, detectors would look for cryptographic signatures proving the text came from a specific AI model.

Reality check: watermarking faces serious practical obstacles. It's trivial to remove watermarks through basic paraphrasing, translation, or even just asking a different AI model to rewrite the text. For watermarking to work, all major AI providers would need to adopt compatible systems, and users would need to access AI only through watermarked channels. Neither seems likely in a competitive market with open-source alternatives readily available.
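To show what "looking for a signature" could mean in practice, here is a toy sketch of one watermarking idea from the research literature, often called a green-list scheme: the generator quietly favors a pseudo-random half of the vocabulary at each step, and the detector checks whether a suspicious share of words falls in that half. I am not claiming this is what OpenAI or Google use. The functions `is_green`, `watermark_z_score`, and `generate_watermarked`, the hash construction, and the tiny vocabulary are all assumptions made for illustration.

```python
import hashlib
import math


def is_green(prev_token: str, token: str, green_fraction: float = 0.5) -> bool:
    """Pseudo-randomly place `token` on the green list, keyed on `prev_token`."""
    digest = hashlib.sha256(f"{prev_token}|{token}".encode("utf-8")).digest()
    value = int.from_bytes(digest[:8], "big") / 2**64  # uniform in [0, 1)
    return value < green_fraction


def watermark_z_score(tokens: list[str], green_fraction: float = 0.5) -> float:
    """How many standard deviations the green count sits above chance."""
    n = len(tokens) - 1  # number of (previous, current) pairs
    if n < 1:
        raise ValueError("need at least two tokens")
    green = sum(is_green(a, b, green_fraction) for a, b in zip(tokens, tokens[1:]))
    expected = green_fraction * n
    std = math.sqrt(n * green_fraction * (1 - green_fraction))
    return (green - expected) / std


def generate_watermarked(vocab: list[str], length: int, start: str = "the") -> list[str]:
    """Toy generator: always pick the first green word (not a real language model)."""
    tokens = [start]
    for _ in range(length):
        prev = tokens[-1]
        # Fall back to the first vocabulary word if nothing happens to be green.
        tokens.append(next((w for w in vocab if is_green(prev, w)), vocab[0]))
    return tokens


VOCAB = [
    "the", "study", "shows", "results", "data", "method", "students", "write",
    "clear", "essay", "model", "text", "pattern", "score", "tool", "draft",
    "review", "source", "claim", "evidence",
]

marked = generate_watermarked(VOCAB, 50)
plain = "students write a clear first draft and then review every claim".split()

print(f"watermarked z-score: {watermark_z_score(marked):.2f}")
print(f"ordinary text z-score: {watermark_z_score(plain):.2f}")
```

The watermarked sequence scores far above chance because nearly every word pair is green. Swap or reorder enough words, as a paraphrase or translation would, and each changed pair gets a fresh coin flip, which pulls the score back toward zero. That is the fragility described above.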

What Educators and Institutions Get Wrong About Detection

Many schools have adopted AI detection policies based on fundamental misunderstandings of how these tools work. I have reviewed dozens of institutional policies that treat detector scores as binary proof rather than probabilistic signals.

Common mistakes include:

- Treating detector scores as evidence: A 70% AI probability doesn't mean 70% of your essay is AI-generated. It means the detector's model assigns a 70% confidence that statistical patterns in your text match its training data for AI-generated content. That's not the same thing at all.

- Ignoring baseline false positive rates: If a detector has even a 10% false positive rate and a professor scans 30 human-written essays, about three innocent students will be flagged on average. Unless instructors account for this, those students face accusations they did nothing to earn.

- Failing to verify through conversation: The most reliable way to verify authorship isn't technological, it's human. A five-minute conversation about the ideas, process, and specific choices in a paper quickly reveals actual understanding.

- Banning AI entirely instead of teaching appropriate use: This is like banning calculators in math class instead of teaching when and how to use them appropriately. AI writing tools are here to stay. The real question is how we use them responsibly.
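The false positive arithmetic from the list above is worth running yourself. This sketch assumes every essay in the batch is human-written and that flags are independent of each other. The last function applies Bayes' rule, and the 20% prior and 90% detection rate I feed it are made-up numbers for illustration, not measurements of any real detector.

```python
def expected_false_flags(n_essays: int, false_positive_rate: float) -> float:
    """Average number of human-written essays wrongly flagged."""
    return n_essays * false_positive_rate


def prob_at_least_one_false_flag(n_essays: int, false_positive_rate: float) -> float:
    """Chance that at least one human-written essay gets flagged."""
    return 1 - (1 - false_positive_rate) ** n_essays


def prob_ai_given_flag(
    prior_ai: float, true_positive_rate: float, false_positive_rate: float
) -> float:
    """Bayes' rule: chance a flagged essay really is AI-written."""
    flagged_ai = prior_ai * true_positive_rate
    flagged_human = (1 - prior_ai) * false_positive_rate
    return flagged_ai / (flagged_ai + flagged_human)


# The scenario from the text: 30 essays, 10% false positive rate.
expected = expected_false_flags(30, 0.10)
at_least_one = prob_at_least_one_false_flag(30, 0.10)

# Hypothetical inputs: 20% of essays are AI-written, detector catches 90% of them.
posterior = prob_ai_given_flag(prior_ai=0.20, true_positive_rate=0.90, false_positive_rate=0.10)

print(f"expected false flags: {expected:.1f}")
print(f"chance of at least one false flag: {at_least_one:.1%}")
print(f"chance a flagged essay is AI-written: {posterior:.1%}")
```

With these inputs, a class of 30 honest students has roughly a 96% chance of producing at least one false accusation. Under the hypothetical 20% prior, a flag is right only about 69% of the time, so nearly one in three flagged students would be innocent.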

How to Use AI Responsibly Without Triggering Detectors

The difference between using AI appropriately and crossing into academic dishonesty isn't about whether you use the technology. It's about how you use it and what role it plays in your intellectual work.

When you are staring at a blank page trying to organize your thoughts on a complex topic, outlining features help you structure your argument without dictating the content. For my students, an AI writing tool for research can be useful at this stage because it helps organize sources and shape a clear direction before the actual drafting begins. You input your thesis and main points, and the tool suggests organizational frameworks. You are still generating the ideas; you are just getting help with the architecture.

The citation assistant is another feature that saves massive time without compromising integrity. Instead of spending hours formatting references in APA or MLA style, you can focus your energy on the actual analysis and argument. The tool handles the mechanical formatting work while you handle the intellectual work. This is exactly the kind of AI assistance that helps rather than replaces your thinking.

When you are revising and want feedback, but your roommate is asleep and office hours aren't until next week, an AI feedback system can identify where your argument loses coherence or where you need stronger evidence. It's like having a writing tutor available 24/7, pointing out issues without fixing them for you.

The key distinction: these tools augment your capabilities without replacing your thinking. You are using AI the same way you might use a grammar checker, a thesaurus, or a writing handbook. You are still the author of your ideas and the one responsible for developing and expressing them clearly.

This approach also happens to be basically undetectable because you are producing genuinely original work. AI detectors can't flag citations formatted by a tool or an outline structure suggested by software. They are looking for patterns in the actual prose, and when you write that prose yourself (even with structural help), your natural voice comes through.

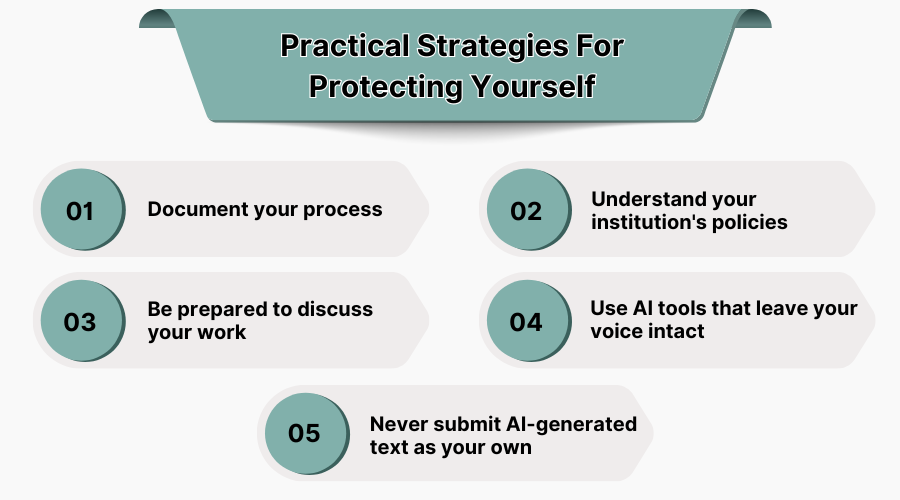

Practical Strategies for Protecting Yourself

Whether or not you use AI writing assistance, you need to protect yourself from false accusations. Here is what actually works:

- Document your process: Keep drafts, outlines, and revision history. Google Docs automatically tracks this. If you are writing in Word, save versions as you go. This evidence proves human authorship more effectively than any detector.

- Understand your institution's policies: Many schools have vague or contradictory AI use guidelines. Know specifically what's allowed before you use any tool, including grammar checkers or citation managers.

- Be prepared to discuss your work: If you can't explain your argument, your key sources, or your writing choices, that's a red flag regardless of detector scores. Genuine authorship means genuine understanding.

- Use AI tools that leave your voice intact: This is where the right AI tool becomes valuable. When you are using AI for structure, feedback, and formatting rather than content generation, you maintain authentic authorship while improving efficiency.

- Never submit AI-generated text as your own: This should be obvious, but the temptation exists. Don't rationalize it as editing or collaboration. If you didn't write it, don't submit it. The risk isn't just getting caught; it's that you are cheating yourself out of developing skills you'll need.

Wrapping up

AI detectors work by analyzing patterns in your writing, looking for statistical signatures that match AI-generated text. They use methods like perplexity scoring, burstiness analysis, and machine learning classification to make probabilistic judgments about authorship.

But these tools are far from perfect. False positives are common, especially for certain groups of students. Detection methods struggle as AI models improve. And no detector can tell the difference between appropriate AI assistance and academic dishonesty; it can only flag patterns in the final text.

The real solution isn't better detection technology. It's clearer thinking about what AI tools should do in academic writing. Tools that support your writing process without replacing your thinking represent the future of responsible AI use in education. They help you write better, faster, and more confidently while keeping your voice and ideas at the center.

AI detectors cannot tell for sure whether a text was AI-generated. They don't give you definite proof; they make guesses based on writing patterns. Studies have reported false positive rates of 10% to over 50%, meaning these tools regularly flag completely human-written work as AI-generated.

Non-native English speakers, strong writers who favor clarity, and students who write in formal academic styles get falsely accused most often. The better you edit your work and the more polished it becomes, the more suspicious it can appear to detectors.

Keep drafts, outlines, and revision history using tools like Google Docs that automatically track changes. Be prepared to discuss your paper in detail, explaining your argument, sources, and specific writing choices.

Detection will probably not become more reliable over time. Each new AI model produces different writing patterns, and detectors trained on older models quickly become outdated. As AI writing becomes more human-like, the reliability of statistical fingerprinting continues to fade.